10. Markov Decision Process

Until now we have seen how a robot move or locate itself.

It is important now to cover how a robot can plan its mission.

We will now cover probabilistic algorithms.

10.1. Motivation

We can take a few examples:

A robotic manipulator grasp and assembles parts arriving in random configuration on a conveyor belt.

The parts that arrive are unknown.

The optimal strategy requires to know the future parts.

A vehicle to move from point A to point B in Toronto.

Shall it take the shortest path through downtown, but losing the GPS.

Or shall it takes the longest path, but keeping the GPS.

Robots need to map an area collectively.

Should they seek each other?

Or should they cover more unknown area alone?

In robotics we need to consider two types of uncertainties:

Uncertainty of the actions.

Uncertainty on the measurement.

Activity

How would you handle these two uncertainties?

10.2. Markov Decision Process

We will star by tackling the problem of the uncertainty on the actions.

Markov Decision Process or called MDP is a mathematical framework that allows us to consider stochastic environment.

10.2.1. The Idea

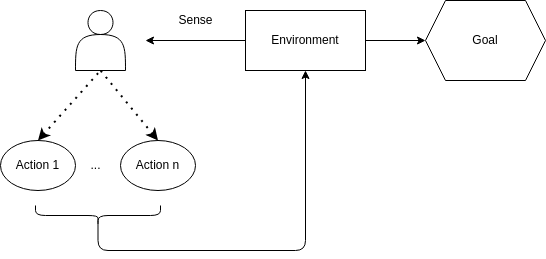

MDPs can be represented graphically as follows:

We have an agent, in our case a robot.

These agents evolve in an environment.

The agent can interact with this environment with different actions.

When the agent uses an action it changes the state of the environment and it gives it a reward.

Activity

Consider a robot that needs to navigate in a maze.

Try to answer the following questions:

What would be the environment?

What would be the actions?

What would be the state?

10.2.2. Formally

We need to define MDPs formally.

Definition

A MDP is defined with a tuple \((S,A,T,R)\):

\(S\) is the state space that contains the set of states \(s\in S\).

\(A\) is the action space that contains the set of actions \(a\in A\).

\(T: S\times A\times S\) is the transition function that defines the probability \(p(s_{t+1}=s'|s_{t}=s,a_{t})\).

\(R: S\times A \rightarrow \mathbb{R}\) is the reward function that defines \(r(s,a)\).

Activity

Remember the problem of detecting Alan in the hallway?

Alan can only move forward.

At each time step, Alan can ever overshoot by 1 cell with a probability of 10%.

Alan can ever undershoot by 1 cell with a probability of 10%.

Now we want to model Alan moving in the hallway as an MDP:

Define the state space.

Define the action space.

Define the transition function.

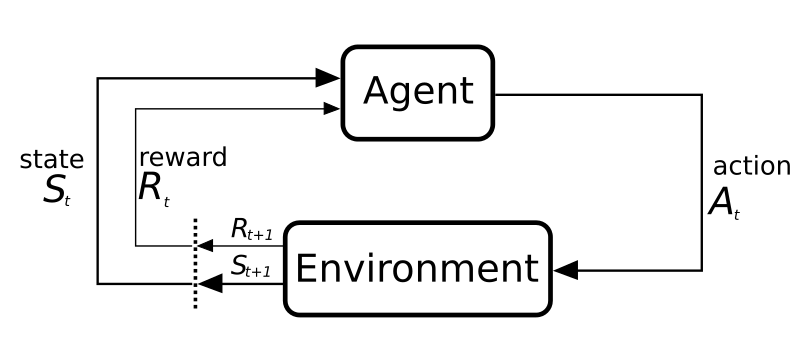

At each time step \(t\), the environment is in a state \(s_t\).

Based on this state, the agent must select an action \(a_t \in A\).

After executing the action

the environment is in a new state \(s_{t+1}\),

and the agent receives a reward \(r_t\).

In the end, they generate a sequence of states and actions:

This sequence is called a trajectory.

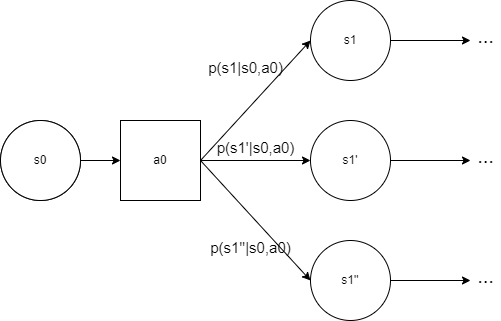

After executing \(a_t\) in \(s_t\), a new state \(s_{t+1}\) is generated.

The new state \(s_{t+1}\) is generated following a discrete probability distribution:

This conditional probability is the transition function of the MDP.

Note

Remind that for all state \(s\in S\) and actions \(a\in A\) we have:

If you look at the transition function, we can note something.

The probability of \(s'\) depends only on state \(s\) and action \(a\).

We call this the Markov property.

Definition

All information about past interactions necessary to predict the future state must be contained in the current state.

Activity

Try to explain why this is important for an MDP.

Finally, the reward for doing an action \(a_t\) in \(s_t\) is given by a function \(r(s,a)\).

10.2.3. Defining goals and rewards

We use robots for something, usually we want to solve a problem.

To solve a problem you have an objective, a goal.

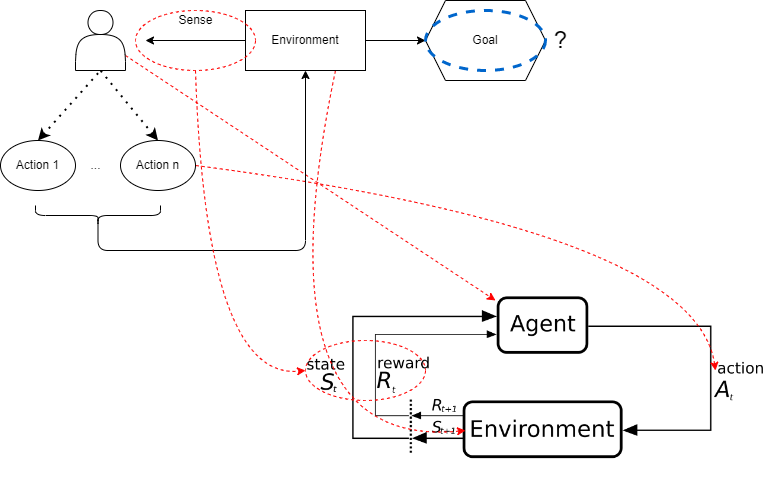

In MDPs the goal is not directly defined.

You can verify in the MDP definition, the goal is mentioned nowhere.

Instead of defining the goal directly, we use the reward function to do it.

After each action \(a\), the environment send to the agent a reward \(r\in \mathbb{R}\).

So the goal for the agent is to maximize the long term cumulated reward.

Consequently, you need to design a reward function that leads to the concrete goal.

It can seem limiting, but it’s very flexible in practice.

Example

Consider a robot in a maze.

It needs to learn how to escape quickly.

The most common practice is to give a reward of \(-1\) per action executed until the robot escape.

Activity

Why do you think this reward function works well to achieve the goal?

Discuss another possible reward function.

The agent will maximize the cumulated reward, so we must provide a reward function that if maximized lead to the goal we want.

Activity

What reward function would you use for TicTacToe?

Consider a game of chess; what do you think about giving rewards for taking the opponent’s pieces?

Important

The reward must communicate what the agent needs to achieve not how to achieve it.